
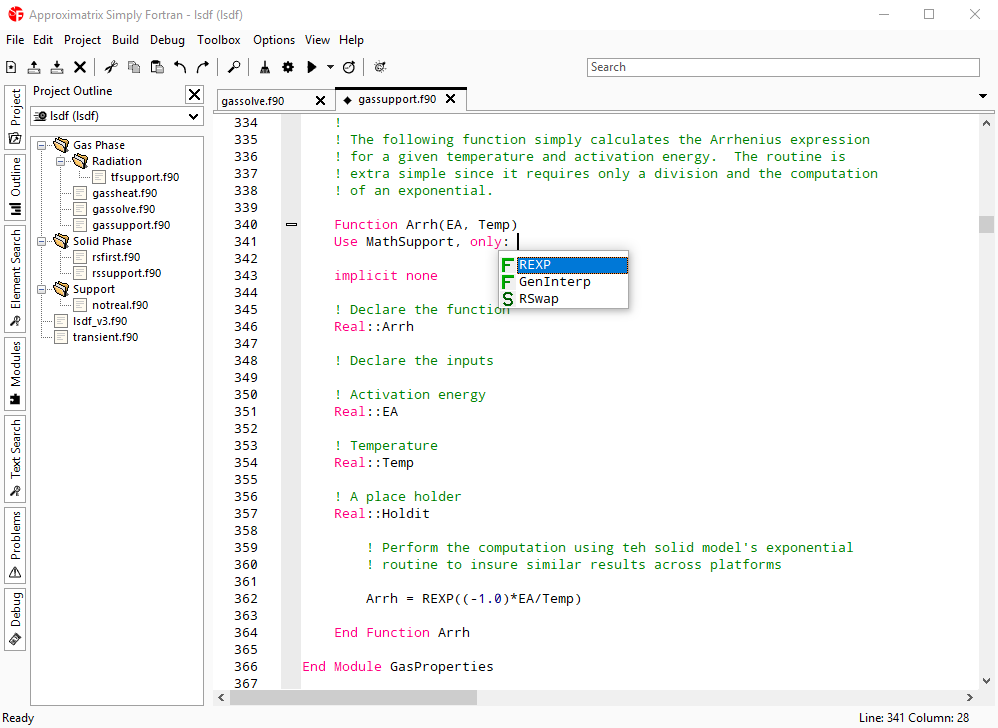

With CUDA you can write a normal program in C, C++, Fortran, etc. designed to run on the CPU, and then take parts of that code and execute them on the GPU with minimal effort, which makes the GPU acceleration almost "transparent" to the programmer. If you'd paid some attention, you would realize by now that CUDA's programming capabilities greatly surpass those of OpenCL, which enables more complex operations in the GPU code. This also means that porting code to CUDA imposes fewer restrictions, and sometimes the code can be transferred directly (like in the example shown above).

Yes, in CUDA you can write the kernel in the host source code, and the compiler separates them; it's cool stuff. Seriously, though, how is the kernel code shown here any different from the very same kernel written in OpenCL?

"If you'd paid some attention, you would realize by now that CUDA's programming capabilities greatly surpass those of OpenCL, which enables more complex operations in the GPU code."

But you were talking about the programming capabilities of the hardware (quoting you), so how does that help here? I don't get why you even bring up writing the kernel in "the host source code". It does not matter where the code is actually stored: it might be a separate file, inline strings, or separate sections.
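For readers following the "kernel in the host source code" point, here is a minimal sketch of CUDA's single-source model. The `__global__` kernel and the host code that launches it live in one `.cu` file, and `nvcc` splits them into device and host compilation. The kernel `saxpy` and the helper `saxpy_selfcheck` are hypothetical illustrations, not the code from the screenshot. The sketch assumes a CUDA toolkit and an NVIDIA GPU are available.

```cuda
// single_source.cu (hypothetical file name); build with: nvcc single_source.cu -o single_source
#include <cstdio>
#include <cmath>
#include <vector>
#include <cuda_runtime.h>

// Device code: __global__ tells nvcc to compile this function for the GPU.
__global__ void saxpy(int n, float a, const float *x, float *y) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        y[i] = a * x[i] + y[i];
    }
}

// Host code in the same file, compiled for the CPU.
// Returns the number of wrong elements, or -1 if any CUDA call fails.
int saxpy_selfcheck() {
    const int n = 1 << 20;
    const float a = 2.0f;
    std::vector<float> x(n, 1.0f), y(n, 3.0f);
    const size_t bytes = n * sizeof(float);

    float *dx = nullptr, *dy = nullptr;
    if (cudaMalloc(&dx, bytes) != cudaSuccess) return -1;
    if (cudaMalloc(&dy, bytes) != cudaSuccess) { cudaFree(dx); return -1; }

    cudaError_t err = cudaMemcpy(dx, x.data(), bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(dy, y.data(), bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        // The <<<blocks, threads>>> launch syntax is a CUDA C++ extension handled by nvcc.
        const int threads = 256;
        const int blocks = (n + threads - 1) / threads;
        saxpy<<<blocks, threads>>>(n, a, dx, dy);
        err = cudaGetLastError();
    }
    // Device-to-host cudaMemcpy waits for the kernel on the default stream to finish.
    if (err == cudaSuccess) err = cudaMemcpy(y.data(), dy, bytes, cudaMemcpyDeviceToHost);
    cudaFree(dx);
    cudaFree(dy);
    if (err != cudaSuccess) return -1;

    int mismatches = 0;
    for (int i = 0; i < n; ++i) {
        if (std::fabs(y[i] - 5.0f) > 1e-6f) ++mismatches;  // expected 2*1 + 3 = 5
    }
    return mismatches;
}

int main() {
    int result = saxpy_selfcheck();
    std::printf("mismatches: %d\n", result);
    return result == 0 ? 0 : 1;
}
```

In OpenCL, by contrast, the same kernel body is usually passed to `clCreateProgramWithSource` as a string, either inline or read from a file, and compiled at runtime with `clBuildProgram`. This is the "separate file, inline strings" point made above. Either way, the kernel body itself looks very similar.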
